I’ve always had a stone in my shoe about being a college dropout. As such, I never imagined I’d be walking into Harvard Law School as anything other than someone who needed directions to MIT. I’m an engineer. I build systems. I’ve spent my career building things that work — reliably, predictably, and without prayer-based assumptions about what’s happening underneath.

Five weeks into retirement, I realized I’m constitutionally incapable of watching the most important conversation in the world go sideways without doing something about it.

Here’s why I couldn’t stay on the sidelines. AI is, by far, the most important conversation society is having right now — and (if you’re not up on current events) it’s not going well. Not even close. The stakes are existential in both directions: get it right and we solve problems that have plagued humanity for centuries; get it wrong and the consequences could be irreversible. And here’s the thing that keeps me up at night — with AI, 90+% of the paths to Utopia and Dystopia are the same. That’s not a reason to stop. That’s the reason why we need to lean into this crucial conversation.

People who work with me know I have a well-deserved reputation for starting the conversations everyone else is avoiding, and doing it as an honest broker — with brutal honesty and forthrightness, even when it’s uncomfortable. Especially when it’s uncomfortable. So here I am.

I have a hypothesis on what’s broken, and it was inspired by René Descartes’ Discourse on Method: the idea that before you can solve anything, you need a clear, shared method for reasoning about it in the first place.

The AI policy conversation isn’t failing because people disagree. It’s failing because they don’t have the infrastructure to disagree productively.

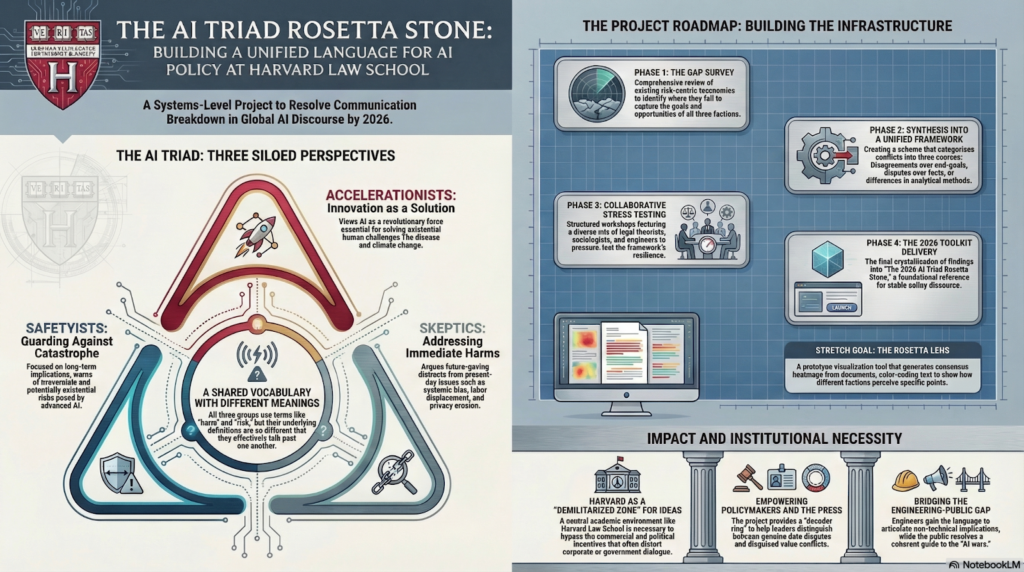

Three communities — Accelerationists, Safetyists, and Skeptics — are locked in simultaneous monologues. They use the exact same vocabulary to mean completely different things. “Risk.” “Harm.” “Safety.” No shared schema, no shared language, no way to even identify whether they’re fighting about facts or values or incentives. The grit of reality is that you can’t resolve a conflict you can’t categorize.

When three groups can’t agree on what the words mean, you don’t have a policy debate. You have a boof-a-rama.

So I’m going to do what engineers do: build the infrastructure that’s missing.

Today I start a Fellowship at Harvard Law School, at the Berkman Klein Center for Internet & Society, working on what I’m calling the AI Triad Rosetta Stone.

Here are the details:

The AI Triad Rosetta Stone

A Framework for Civic Discourse Leading to Durable Policy Frameworks

I. Executive Summary: A Systems-Architecture Perspective

The current AI landscape is characterized by a Triad of conflicting perspectives—Accelerationists, Safetyists, and Skeptics—who are locked in simultaneous monologues without a shared set of facts or language. This project proposes a Rosetta Stone translation layer to map these distinct moral vocabularies onto a single, unified schema. By treating socio-technical friction as a system error, this work aims to provide policymakers, the press, and engineers with the diagnostic infrastructure necessary to distinguish between value conflicts and factual disagreements.

II. The Problem: A Systems-Level Communication Failure

AI policy conversation has fractured into three siloed communities that frequently talk past one another:

- Accelerationists: View AI as a revolutionary force for solving existential challenges like climate change and disease.

- Safetyists: Warn of catastrophic, irreversible, and potentially existential risks.

- Skeptics: Argue that future-gazing distracts from immediate harms like systemic bias, labor displacement, and privacy erosion.

These groups use the same vocabulary—such as harm and risk—to mean entirely different things. Without a shared language, they are unable to recognize areas of agreement or disagreement. This project treats this friction as a dilemma involving trade-offs rather than a problem with a single solution, seeking to build the infrastructure that allows these perspectives to coexist without system collapse.

III. The Proposal: Building Conceptual Infrastructure

This project applies engineering principles like system resilience and root-cause analysis to the ecosystem of public debate. The goal is not to engineer a single solution, but to engineer the table at which solutions can be negotiated.

Phase 1: Survey

Existing taxonomies are primarily risk-centric and often fail to capture the goals and opportunities identified by Accelerationists. This phase involves a comprehensive survey of current frameworks to identify these gaps.

Phase 2: Synthesis into a Unified Framework

This phase integrates inputs into a unified conceptual framework based on the principle that conflicts arise from three distinct sources:

- Goals/Values/Incentives: Differences in the desired end-state.

- Data/Facts: Disagreements over what is objectively true.

- Method: Differing interpretations of how to analyze data.
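To make the three-source idea concrete, here is a minimal sketch of how a disagreement between two camps might be tagged by source. Everything in it is an assumption for illustration: the `Position` fields, the `diagnose` function, and the exact-match string comparison are hypothetical stand-ins, not part of the proposed framework, and real positions would need far richer comparison than string equality.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Camp(Enum):
    ACCELERATIONIST = "accelerationist"
    SAFETYIST = "safetyist"
    SKEPTIC = "skeptic"


class ConflictSource(Enum):
    VALUES = "goals/values/incentives"
    FACTS = "data/facts"
    METHOD = "method"


@dataclass(frozen=True)
class Position:
    """Hypothetical record of one camp's stance on a single question."""
    camp: Camp
    desired_end_state: str  # what the camp wants (goals/values/incentives)
    factual_claim: str      # what the camp believes is true (data/facts)
    method: str             # how the camp analyzes the data (method)


def diagnose(a: Position, b: Position) -> set[ConflictSource]:
    """Return the sources on which two positions differ.

    Toy comparison: two fields "agree" only if their strings match exactly.
    """
    sources: set[ConflictSource] = set()
    if a.desired_end_state != b.desired_end_state:
        sources.add(ConflictSource.VALUES)
    if a.factual_claim != b.factual_claim:
        sources.add(ConflictSource.FACTS)
    if a.method != b.method:
        sources.add(ConflictSource.METHOD)
    return sources


# Illustrative, made-up positions: same facts and method, different goals.
p1 = Position(Camp.ACCELERATIONIST, "deploy quickly", "capability X exists", "benchmark review")
p2 = Position(Camp.SAFETYIST, "deploy cautiously", "capability X exists", "benchmark review")
print(diagnose(p1, p2))  # only ConflictSource.VALUES differs here
```

The point of the sketch is the return type: a disagreement is reported as a set of sources rather than a single verdict, so a dispute that looks like a data fight can be shown to be a values conflict instead.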

Phase 3: Collaborative Stress Testing

The framework will be evaluated through structured workshops with intentionally diverse attendees, including experts in legal theory, sociology, and technical engineering.

Phase 4: Final Toolkit

Findings will be crystallized into The 2026 AI Triad Rosetta Stone: A Toolkit for Policy Discourse, a foundational document for stable reference.

Stretch Goal: Rosetta Lens

A prototype visualization tool that ingests documents and generates a consensus heatmap, colorizing text based on alignment and allowing users to see how different factions view specific points.
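As a rough sketch of what the heatmap step could look like, the snippet below turns per-camp alignment scores for one passage into a consensus score and a color bucket. The scoring scale (-1 to 1), the use of standard deviation as the divergence measure, the thresholds, and the function names are all assumptions made for illustration; the proposal does not specify how alignment would be measured.

```python
from __future__ import annotations

from statistics import pstdev


def consensus_score(alignment: dict[str, float]) -> float:
    """Map per-camp alignment scores (assumed to lie in [-1, 1]) to [0, 1].

    Returns 1.0 when every camp scores the passage identically and falls
    toward 0.0 as the scores diverge. Population standard deviation is the
    (assumed) divergence measure; for values in [-1, 1] it never exceeds 1.
    """
    spread = pstdev(list(alignment.values()))
    return 1.0 - min(spread, 1.0)


def color_bucket(score: float) -> str:
    """Hypothetical thresholds for colorizing a passage."""
    if score >= 0.8:
        return "green"   # broad agreement across camps
    if score >= 0.5:
        return "yellow"  # partial agreement
    return "red"         # camps diverge sharply


# Made-up scores for a single passage, one per camp.
passage = {"accelerationist": 0.5, "safetyist": 0.5, "skeptic": 0.5}
print(color_bucket(consensus_score(passage)))
```

A real tool would still need a defensible way to produce the per-camp scores in the first place, which is the hard part this sketch deliberately leaves out.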

IV. Intended Impact and Audiences

The project aims to move the conversation from three simultaneous monologues to a structured dialogue.

- Policymakers: Acquire a diagnostic tool to determine if a disagreement is rooted in values, facts, or economic incentives, allowing for more targeted policy responses.

- The Press: Gain a decoder ring to identify when sources are disguising value conflicts as data disputes.

- Engineers: Receive a language to articulate the non-technical implications of their technical work.

- The Public: Obtain a coherent decoder to understand the actual stakes of the AI wars.

V. Institutional Necessity

This work requires a demilitarized zone for ideas, as commercial and political incentives within corporations or government agencies can distort dialogue. A neutral academic environment is necessary to serve as a trusted forum where opposing camps can engage in difficult conversations and pressure-test the framework against diverse research.

This is a fantastic pursuit for your “retirement.” Thank you!!

Thanks Judith!

Fascinating. I most eagerly look forward to what this, and you, have in store.

I work with frontline field service workers every day who are genuinely frightened that AI is going to take their jobs. I watch neighbors and friends show up to city council meetings and zoning board hearings scared, angry, and often badly misinformed. And here’s the irony that keeps me up at night: many of those same people are organizing their “No AI” communities on Facebook, streaming music on Spotify, and shopping on Amazon — all of which run on AI, and all of which are fueling the exact data center demand they’re protesting down the street.

That’s not hypocrisy. That’s a comprehension gap. And frameworks like Jeffrey’s are exactly the infrastructure we need to close it.

This is also proof that expertise isn’t a credential — it’s a body of work. Harvard didn’t make Jeffrey Snover a scholar. Thirty years of building real systems in the real world did. Harvard is just finally paying attention.

I’m interested in following along. How might I stay up to date?

I’ll be posting here and on LinkedIn.

Having seen the way progress is made within SDOs, I would love to understand how this would differ and avoid some of the conflicts faced in those forums, which have led to the current inability of standards to keep pace with the rate of acceleration in the technology.

Victor – SDO == ?